When Students Boo and VCs Cheer: AI's Cultural Split

A post about students booing "AI as the next industrial revolution" hit 33K upvotes the same week Andreessen pitched a Golden Age. For builders, the framing gap is now operational.

On May 8, 2026, a vice president named Gloria Caulfield walked to the podium at the University of Central Florida's spring commencement for the College of Arts and Humanities and the Nicholson School of Communication and Media. She told the graduating class that "the rise of artificial intelligence is the next industrial revolution." The crowd booed. Loudly. Someone yelled, "AI sucks!" Caulfield, visibly stunned, turned with her hands out and said, "Oh, what happened?" When she pivoted to "only a few years ago, AI was not a factor in our lives," the crowd cheered. Three days later, 404 Media's writeup of the moment was the #1 post on r/technology, by a wide margin, at 33,096 upvotes. The same story registered a meager ~36 points on Hacker News: a roughly 900× engagement gap between the mainstream cultural surface and the developer surface.

In the same 24-hour window, Marc Andreessen sat down with Erik Torenberg on Moment of Zen's sister show MTS for an episode titled "The Golden Age Thesis." The pitch was direct: "narratives around AI, from fear to hype, are influencing public perception, while real-world usage tells a very different story." Andreessen made the case that AI's golden age is here, that the moral panic is a recurrence of the same pattern that greeted electric lighting and the automobile, and that capability expands work rather than eliminating it.

Two simultaneous broadcasts. Two completely different audiences. One is the largest mainstream-Reddit AI story of the quarter. The other is the most polished VC long-form of the week. They are not in conversation with each other — they are operating in separate framing universes. And for anyone shipping consumer-facing AI copy in the next twelve months, the gap between them is the single most actionable piece of cultural intelligence on the table.

The 900× engagement gap is the actual signal

The booing itself is not the news. Commencement speakers get heckled all the time. The news is what the distribution pattern looks like across surfaces.

The story landed first as a clip, then spread to Slashdot, Kotaku, Boing Boing, Inc., and (notably for reach across the political spectrum) Fox News / OutKick. It hit r/technology and stayed at the top of the subreddit for the week. It registered as a blip on Hacker News, where the top comments were largely variations on "of course they booed, the speaker was a Tavistock Group VP, this is a UCF politics story." The HN read was contextual and dismissive. The Reddit read was categorical and angry.

This is the pattern that matters. When the same artifact pulls 900× more engagement on a mainstream-cultural surface than on a developer-class surface, the story is no longer about the artifact. It is about which audience is doing the narrative work on AI — and right now the mainstream audience is doing far more of it than the dev audience is.

The data point: r/technology has roughly 17 million subscribers, about the population of the Netherlands. Hacker News has roughly 5 million monthly visitors. That is a difference of only 3 to 4×, so the 900× gap on a single artifact in a single 24-hour window is not an audience-size effect. It is a salience effect. The booing matters more on Reddit because it resonates there. On Hacker News, where most readers ship code with AI assistance every day, "AI is the next industrial revolution" is a yawn, not a flashpoint.
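A back-of-the-envelope check of the salience argument, using only the approximate figures quoted above (33,096 upvotes, ~36 HN points, ~17 million subscribers, ~5 million monthly visitors). Subscribers and monthly visitors are not the same metric, so treat this as a rough sketch rather than a measurement; the function names are illustrative.

```python
# Back-of-the-envelope check: is the Reddit/HN engagement gap explained by audience size?
# Figures are the approximate numbers quoted in this article. Subscribers and
# monthly visitors are different metrics, so this is only a rough comparison.

REDDIT_UPVOTES = 33_096
HN_POINTS = 36
REDDIT_AUDIENCE = 17_000_000   # r/technology subscribers (approx.)
HN_AUDIENCE = 5_000_000        # Hacker News monthly visitors (approx.)


def engagement_gap(reddit: float, hn: float) -> float:
    """Raw ratio of Reddit engagement to HN engagement."""
    return reddit / hn


def audience_ratio(reddit_audience: float, hn_audience: float) -> float:
    """How many times larger the Reddit audience is than the HN audience."""
    return reddit_audience / hn_audience


raw = engagement_gap(REDDIT_UPVOTES, HN_POINTS)
size = audience_ratio(REDDIT_AUDIENCE, HN_AUDIENCE)
residual = raw / size  # gap left over after normalizing for audience size

print(f"raw gap: {raw:.0f}x")
print(f"audience-size ratio: {size:.1f}x")
print(f"gap after normalizing for size: {residual:.0f}x")
```

The raw gap comes out around 919×, the audience ratio around 3.4×, and roughly a 270× gap survives normalization. That leftover is what the salience reading is pointing at.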

What Andreessen actually argued — and where it lands

The Golden Age Thesis is not new for Andreessen. It is a load-bearing extension of his 2023 essay "Why AI Will Save the World," sharpened with nearly three years of operator data. The new framing emphasizes three things:

- Real-world usage diverges from public discourse. Enterprise adoption metrics, agent-runtime maturity, and the explosion of "AI-native" startups suggest the on-the-ground story is quieter and more positive than the cable-news story.

- Moral panics are a recurring pattern. Every general-purpose technology since electricity triggered an existential-risk discourse that aged poorly. The implication: the booing is a Luddite tell, not a market signal.

- Capability expands work. The historical pattern is that productivity-multiplier technologies create more demand for adjacent labor, not less.

Each of these points is defensible in isolation. The problem is the audience. Andreessen is presenting them on a Tier-1 VC podcast hosted by a former a16z partner, distributed primarily through Substack and YouTube to an audience of operators, founders, and capital allocators. A one-line version of the same narrative, delivered to a UCF arts and humanities graduating class, got booed off the stage in under twenty seconds.

📖 Want the broader cultural context? We covered the Altman Molotov attack and the rise of "Luigi-ing" CEOs in anti-AI Discords last month — same vector, sharper edge.

The Gallup data backs the booing, not the thesis

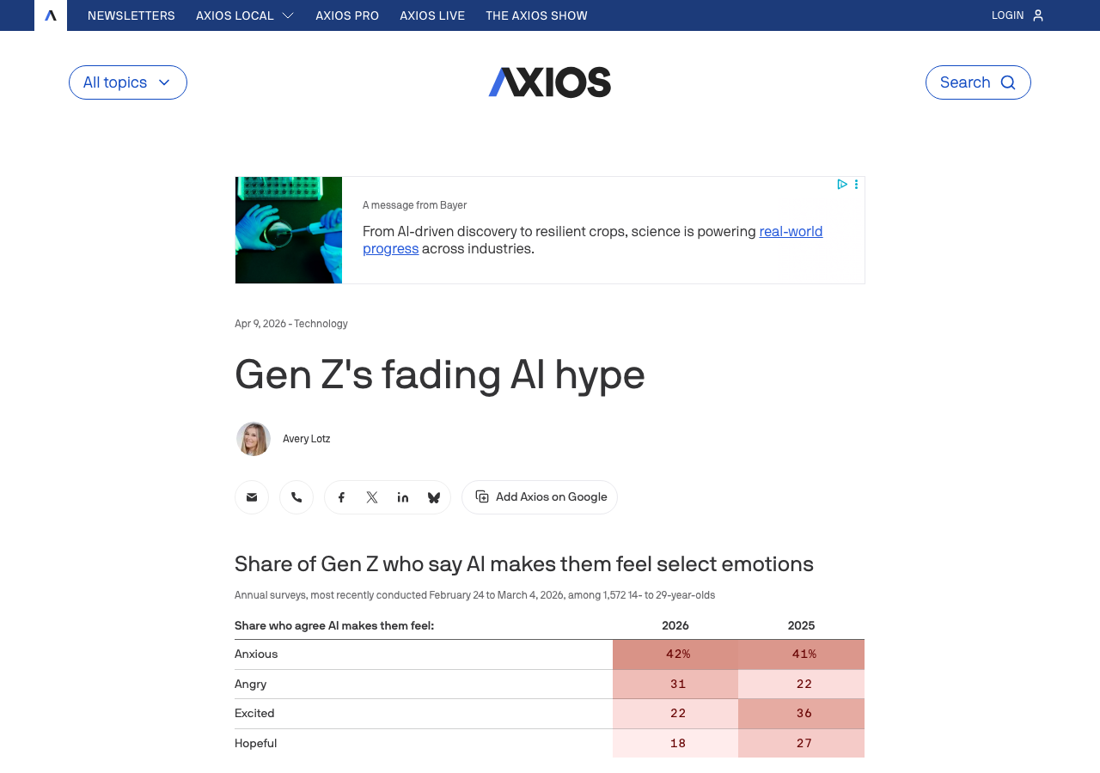

This is not vibes. This is measured. Gallup's 2026 Gen Z poll, released April 9 and widely covered by Axios and U.S. News, shows the cultural-rejection signal hardening on the same demographic that just booed Caulfield:

- Excitement about AI fell from 36% to 22% year-over-year among 14- to 29-year-olds

- 31% report outright anger toward AI, up from 22%

- Hopefulness dropped from 27% to 18%

- 48% of young workers say risks of AI at work outweigh the benefits — up from 37% in 2025

- Fewer than 3 in 10 trust AI-assisted work, and virtually none trust work done with AI alone
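The Gallup bullets mix levels and changes, and a 14-point drop reads very differently from a 39% decline even though both describe the same data. A small sketch that tabulates both, using only the figures listed above; the dictionary labels and helper names are illustrative, and the point-vs-relative framing is ours, not Gallup's.

```python
# Year-over-year changes in the Gallup Gen Z figures quoted above
# (percent of respondents, prior year -> 2026). "pts" is the
# percentage-point change; the parenthesized value is the relative change.

gallup = {
    "excited about AI": (36, 22),
    "angry about AI": (22, 31),
    "hopeful about AI": (27, 18),
    "workers: risks outweigh benefits": (37, 48),
}


def point_change(before: int, after: int) -> int:
    """Percentage-point change from the earlier survey to the later one."""
    return after - before


def relative_change(before: int, after: int) -> float:
    """Change expressed as a fraction of the earlier value."""
    return (after - before) / before


for label, (before, after) in gallup.items():
    pts = point_change(before, after)
    rel = relative_change(before, after)
    print(f"{label:34s} {before:>3}% -> {after:>3}%  {pts:+d} pts ({rel:+.0%})")
```

Excitement falls 14 points, which is roughly a 39% decline relative to last year's level; anger rises 9 points, roughly 41% in relative terms.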

The Gallup numbers are the structural backbone of the booing story. A 26-point year-over-year swing on "AI will do more harm than good for critical thinking" is the kind of movement that shows up in product-market-fit data within two quarters. Marketers who calibrate to last year's "Gen Z is the AI-native generation" framing are already shipping copy that lands wrong.

Why the workforce numbers make the resistance rational

The booing is not a disconnect from the data. It is a response to the data.

Q1 2026 saw more than 45,000 tech jobs eliminated, with AI explicitly cited as the driver in roughly 20% of cuts. Block CEO Jack Dorsey eliminated 4,000 roles, 40% of the company's global workforce, citing "the growing capability of AI tools to perform a wider range of tasks." Oracle cut 20,000 to 30,000 roles in April. The Challenger, Gray & Christmas report listed AI as the single largest stated reason for cuts in March and April, accounting for over a quarter of all April layoffs.
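The percentages above imply concrete headcounts. A rough sketch of that arithmetic, taking the article's approximate inputs at face value (the helper names are hypothetical, and the results are only as precise as the "roughly" and "more than" in the source figures):

```python
# Rough arithmetic behind the layoff figures quoted above.
# Inputs are the article's approximate numbers, not independent data.

Q1_TECH_CUTS = 45_000   # "more than 45,000" tech jobs eliminated in Q1 2026
AI_CITED_SHARE = 0.20   # AI cited as the driver in "roughly 20%" of cuts
BLOCK_CUTS = 4_000      # roles eliminated at Block
BLOCK_SHARE = 0.40      # "40% of the company's global workforce"


def ai_attributed(total_cuts: int, share: float) -> int:
    """Approximate number of cuts where AI was the stated driver."""
    return round(total_cuts * share)


def implied_headcount(cuts: int, share_of_workforce: float) -> int:
    """Workforce size implied when `cuts` roles equal `share_of_workforce` of it."""
    return round(cuts / share_of_workforce)


print("AI-attributed Q1 cuts (approx.):", ai_attributed(Q1_TECH_CUTS, AI_CITED_SHARE))
print("Block headcount implied before cuts:", implied_headcount(BLOCK_CUTS, BLOCK_SHARE))
```

That works out to roughly 9,000 Q1 cuts with an explicit AI rationale, and a pre-cut Block workforce of about 10,000.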

For a graduating arts and humanities class — exactly the cohort whose career paths in writing, journalism, design, and media production are the most direct casualties of generative AI — the "next industrial revolution" framing reads as the speaker's company taking credit for the demolition of the audience's career trajectory. Of course they booed.

We covered the structural pattern of AI-justified workforce reductions at Meta in detail last quarter. The story is not that AI causes the layoffs. The story is that AI provides the legible justification the layoffs needed.

The contamination vector: AI text is now in the textbooks

Compounding the Gen Z anger is a parallel signal that did not trend on r/technology but did go big on r/singularity: a 4,774-upvote thread documenting ChatGPT-generated content appearing in K-12 and college textbooks. Not student work — the source material itself.

Simon Willison's May 11 link-post on Jason Koebler's "Zombie Internet" essay named the broader pattern: AI-generated text is no longer just on social media or in spam. It is contaminating the baseline materials humans learn from before they encounter AI tools. Willison frames it sharply: "filtering it is mentally exhausting and it's even starting to distort regular human writing styles."

For students who are simultaneously (a) being told their career path is being eliminated by AI, (b) reading textbooks they suspect were written by AI, and (c) watching the same VCs who fund the AI labs collect speaking fees to tell them it's all an industrial revolution — the booing is not irrationality. It is calibration.

Builder takeaway: if your consumer-facing copy still leads with inevitability framings — "the future of work," "the next industrial revolution," "AI is here to stay" — you are writing for the audience that already agrees with you and alienating the much larger audience that has been moving the other way for eighteen months. The Gallup data is the leading indicator. The booing is the lagging indicator. The market response is in front of you.

The framing that actually works in May 2026

We are not arguing against AI. ComputeLeap publishes a half-dozen technical AI tutorials a week. We use the agents we cover. The argument is narrower and more operational: the frames that win on consumer-facing surfaces in May 2026 are the opposite of the frames that win on a16z podcasts.

Here is the operational pattern we are seeing perform:

| What loses (May 2026) | What wins (May 2026) |
|---|---|
| "The next industrial revolution" | "Here is what it actually does, and what it doesn't" |
| "AI will save the world" | "AI is a power tool. Treat it like one." |
| "The future of work is here" | "Some workflows are 10× faster. Others are slower and more error-prone. Here's how to tell." |
| "AI-native" / "AI-first" branding | Specific, testable capability claims with benchmarks |
| Inevitability rhetoric | Trade-off rhetoric |
| Founder-as-prophet posture | Operator-as-mechanic posture |

This is the framing pattern that survives the booing test. Not because it apologizes for AI. Because it treats the audience as adults who have already made up their minds about whether AI is "good" — and who now want to know which specific tool, in which specific context, with which specific failure modes, is worth their time.

The Hacker News tell

Worth noting: HN's response to the booing story was not pro-Caulfield. The top comments were either contextual ("Tavistock Group, of course UCF would react") or sided with the students while resenting the coverage ("the framing is dumb, but so is the speaker"). The dev surface is not pro-inevitability either. It is bored by the inevitability discourse because it has been shipping with the tools for two years. The Reddit surface is angry at the inevitability discourse because that discourse is being deployed against its readers as workforce justification.

These are two different forms of disagreement, and they imply two different copy strategies:

- For developer audiences: drop the inevitability rhetoric because it's boring. Lead with capability specifics, benchmarks, and trade-off discussions. The HN audience will skim past anything that reads like a press release.

- For consumer audiences: drop the inevitability rhetoric because it's enraging. Lead with concrete utility, honest limitations, and explicit acknowledgement of the workforce dislocation conversation. The Reddit audience will hate-share anything that reads like a Tavistock Group commencement speech.

Both audiences want the same thing from copy: less performance, more substance. The booing makes the consumer-side version of that demand explicit. The Andreessen episode is the artifact that demonstrates how easy it is to miss it.

What the next 6–12 months look like

We are confident enough in this thesis to make four near-term predictions:

- Mainstream-press AI coverage will shift further toward consequence-framing. Watch for the NYT / Atlantic / New Yorker angle to converge on "what is being lost" rather than "what is becoming possible." The booing video is too cinematic for the cycle to ignore.

- At least one commencement speaker from a major tech company will be cancelled or quietly swapped within the next twelve months. The Caulfield clip is now a reusable asset for student governments planning protests.

- Consumer AI products will start shipping copy that explicitly disclaims the inevitability frame. The first major brand to lead with "AI is a tool, not a revolution" will get a six-month earned-media bump.

- VC long-form will get further out of phase, not closer. The Andreessen-Torenberg episode is a leading indicator, not a course-correction. The next Sequoia / a16z thesis essays will double down. The dissonance with the mainstream surface will widen before it narrows.

The Polymarket version of this thesis is harder to construct (no clean betting market on "tone of mainstream AI coverage"), but the proxies — Gen Z favorability, AI-attributed layoff counts, top-of-Reddit-week sentiment — all point the same direction.

The single most actionable line from the week

It comes not from Andreessen and not from the booing crowd. It comes from an HN comment buried 80 comments deep in the original thread:

"The speaker isn't wrong about industrial revolutions. She's wrong about which side of one she's standing on."

That is the framing that would have survived the booing. That is the framing that survives the Gallup data. And, perhaps tellingly, it is close to where Andreessen nearly lands at the end of the Golden Age episode, when he argues that "increased capability tends to expand work rather than eliminate it" but stops short of naming the corollary: that the expansion and the elimination happen on different timelines, to different people, and that the people on the wrong side of the gap are the ones doing the booing.

The cultural split is not a temporary mood. It is a structural feature of where we are in the AI rollout. Builders who calibrate to it will ship better copy. Builders who don't will get booed.

About ComputeLeap Team

The ComputeLeap editorial team covers AI tools, agents, and products — helping readers discover and use artificial intelligence to work smarter.
